Blog/Xen performance using NUMA on EPYC CPUs

2021-04-06: Improving Xen performance with NUMA on EPYC CPUs

AMD's Core Complex (CCX) design means that a group of CPU cores within the processor shares the same L3 cache. Cache latency within one CCX is greatly improved over a traditional design. One drawback is that a core pays a latency penalty when it accesses data in the cache of a non-local CCX, which can harm performance in some applications.

Depending on the CPU generation, the number of cores and the amount of cache per CCX are as follows:

- EPYC 7001: 4 cores, 8 MB cache
- EPYC 7002: 4 cores, 16 MB cache
- EPYC 7003: 8 cores, 32 MB cache
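To get a feel for what these sizes mean, the sketch below estimates how many CCXs (and therefore separate cache domains) a processor has. It assumes every CCX is fully populated, which is not true of every model: parts that ship with fewer active cores per CCX will have more CCXs than this estimate. The function name and the 64-core example are illustrative only.

```python
import math

# Cores and cache (MB) per CCX, taken from the list above.
CCX_LAYOUT = {
    "EPYC 7001": {"cores_per_ccx": 4, "cache_mb_per_ccx": 8},
    "EPYC 7002": {"cores_per_ccx": 4, "cache_mb_per_ccx": 16},
    "EPYC 7003": {"cores_per_ccx": 8, "cache_mb_per_ccx": 32},
}


def estimate_ccx_count(generation: str, total_cores: int) -> int:
    """Estimate the CCX count, ASSUMING every CCX is fully populated."""
    cores_per_ccx = CCX_LAYOUT[generation]["cores_per_ccx"]
    return math.ceil(total_cores / cores_per_ccx)


# Hypothetical fully populated 64-core parts.
for gen in ("EPYC 7002", "EPYC 7003"):
    print(gen, estimate_ccx_count(gen, 64))
```

For a real server, the topology reported by the hypervisor is the authoritative source, not this estimate.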

Xen NUMA Aware Scheduler

Xen has had a NUMA-aware CPU scheduler since Xen 4.3. This enables Xen to place the virtual CPUs of an individual VM within a single NUMA node, which can greatly increase the cache-hit rate and performance of VMs.

Last-Level Cache as NUMA Node

On AMD EPYC servers there is a BIOS setting to enable Last-Level Cache (LLC) as NUMA Node (sometimes called CCX as NUMA Domain). This creates a virtual NUMA domain per CCX, which the Xen CPU scheduler can then use to group a VM's virtual CPUs.
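One way to confirm that the setting took effect is to look at the topology Xen reports with `xl info -n` in dom0. The sketch below groups logical CPUs by NUMA node from that output. It assumes the `cpu_topology` section is a table with `cpu`, `core`, `socket` and `node` columns; the sample text is a hypothetical, abbreviated stand-in for real output, and `cpus_per_node` is an illustrative name.

```python
from collections import defaultdict


def cpus_per_node(xl_info_text: str) -> dict[int, list[int]]:
    """Group logical CPU ids by NUMA node from the cpu_topology table."""
    nodes = defaultdict(list)
    in_table = False
    for line in xl_info_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("cpu_topology"):
            in_table = True
            continue
        if not in_table or stripped.startswith("cpu:"):
            # Skip everything before the table, and the table's header row.
            continue
        parts = stripped.replace(":", " ").split()
        if len(parts) == 4 and all(p.isdigit() for p in parts):
            cpu, _core, _socket, node = map(int, parts)
            nodes[node].append(cpu)
        elif stripped:
            # First non-empty line that is not a table row ends the table.
            break
    return dict(nodes)


# Hypothetical, abbreviated sample; the real layout may differ (assumption).
SAMPLE = """\
cpu_topology           :
cpu:    core    socket     node
  0:       0        0        0
  1:       0        0        0
  2:       1        0        1
  3:       1        0        1
numa_info              :
"""

print(cpus_per_node(SAMPLE))
```

On a live host the text could come from `subprocess.run(["xl", "info", "-n"], capture_output=True, text=True, check=True).stdout`. With LLC as NUMA Node enabled, you would expect one node per CCX rather than one per socket.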

Benchmarking NUMA mode with Ubuntu on XCP-ng server 8.2

The following benchmarks were run in an Ubuntu 20.10 VM with 12 cores on an XCP-ng server with an EPYC 7402P 24-core/48-thread CPU.

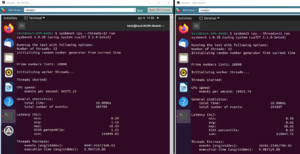

Sysbench

The Sysbench result increased by 87%.

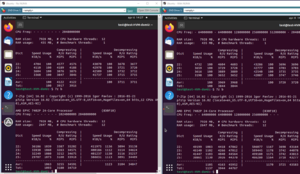

7-Zip

The 7-Zip result increased by almost 28%.

SIMULIA Abaqus FEA

Lastly, I asked a colleague to run some heavy calculations with SIMULIA Abaqus using 4 cores on a Windows Server 2016 VM. The simulation run time went down from 75 minutes to 60 minutes: a 20% reduction in run time, or a 1.25x speedup!
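The run-time figures above can be expressed in two ways: as a reduction in run time or as a speedup factor. Here is a minimal sketch of the arithmetic (the function name is illustrative):

```python
def runtime_change(before_min: float, after_min: float) -> tuple[float, float]:
    """Return (percent reduction in run time, speedup factor)."""
    reduction_pct = (before_min - after_min) / before_min * 100
    speedup = before_min / after_min
    return reduction_pct, speedup


reduction, speedup = runtime_change(75, 60)
print(f"{reduction:.0f}% less run time, {speedup:.2f}x speedup")
```

The two numbers describe the same measurement: the job finishes in 20% less time, which means 25% more work gets done per unit of time.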