Blog/Deinterlacing Properly

2021-02-27: Proper Deinterlacing

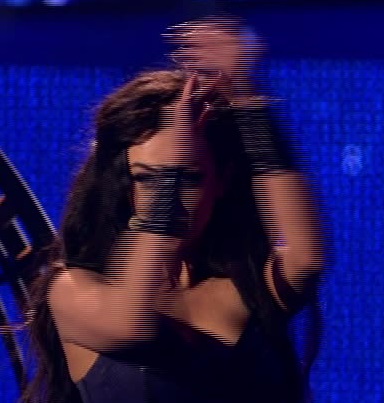

There are lots of guides on the internet about what interlacing is and how to deal with it on computer screens. Here is an interlaced video frame, with the typical comb pattern clearly visible.

Background

Normal video material (meant for TV broadcasts) is recorded at either 60 (NTSC, strictly 59.94) or 50 (PAL) pictures per second.

When we store those pictures as interlaced video, what we actually do is halve the vertical resolution of each picture and store two pictures together in one frame. Each half-height picture is referred to as a field.

During playback, every second row is taken from the second field. This is what leads to the interlacing pattern known as combing. It happens because the two source pictures were recorded 20 ms apart (at 50 fields per second). Objects simply moved between the two shots, so they do not line up when the video stream is rendered as whole frames.
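The effect can be reproduced with a toy example. The following is a minimal sketch, not real video code: the tiny text "picture", the `capture` and `weave` helpers, and the two-pixel movement are all assumptions made for illustration.

```python
# Hypothetical illustration: a tiny 8x6 "picture" with a vertical bar that
# moves two columns to the right between the two capture times.

WIDTH, HEIGHT = 8, 6

def capture(bar_x):
    """Return a full picture (list of text rows) with a vertical bar at column bar_x."""
    return ["".join("#" if x == bar_x else "." for x in range(WIDTH))
            for _ in range(HEIGHT)]

def weave(picture_a, picture_b):
    """Build one interlaced frame: even rows from picture_a, odd rows from picture_b."""
    return [picture_a[y] if y % 2 == 0 else picture_b[y] for y in range(HEIGHT)]

first = capture(bar_x=2)    # scene at time t
second = capture(bar_x=4)   # scene 20 ms later (50 fields per second)

frame = weave(first, second)
print("\n".join(frame))
```

Printing the woven frame shows the bar alternating between two columns from one row to the next, which is the comb pattern in miniature.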

Deinterlacing video

The solution to the combing issue is to scale each field up to a full frame and render the resulting frames at the original picture rate (50 per second for PAL material), just as they were recorded.
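As a rough sketch of that idea, the simplest possible version separates the two fields and repeats each row to get back to full height. The helper names (`split_fields`, `line_double`, `bob`) are made up for this example, top-field-first order is assumed, and real deinterlacers interpolate rather than repeat rows.

```python
def split_fields(frame):
    """Return (top_field, bottom_field): the even rows and the odd rows."""
    return frame[0::2], frame[1::2]

def line_double(field):
    """Scale a field back to full height by repeating every row."""
    full = []
    for row in field:
        full.append(list(row))
        full.append(list(row))
    return full

def bob(frames):
    """Turn each interlaced frame into two progressive frames, one per field."""
    out = []
    for frame in frames:
        top, bottom = split_fields(frame)
        out.append(line_double(top))      # assumption: top field was recorded first
        out.append(line_double(bottom))
    return out

# Two interlaced frames, four rows each; the numbers mark which picture a row came from.
interlaced = [
    [[1, 1], [2, 2], [1, 1], [2, 2]],
    [[3, 3], [4, 4], [3, 3], [4, 4]],
]
progressive = bob(interlaced)
print(len(interlaced), "interlaced frames ->", len(progressive), "progressive frames")
```

Every output frame now contains rows from a single moment in time, so there is nothing left to comb, and the number of frames doubles.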

If we instead converted to 25p, using advanced deinterlacing filters to remove the combing, as most current software does, we would lose temporal resolution.

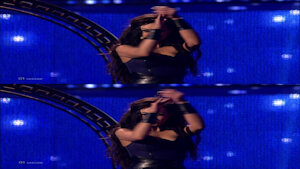

Look at her hands in the example image. In 25p mode the missing movement is clearly visible.
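The loss is easy to put in numbers. This small sketch assumes PAL-style 50i material and uses a hypothetical `timestamps_ms` helper to list the moments in time that each output format can show during one second.

```python
def timestamps_ms(rate, duration_s=1):
    """Capture times in milliseconds for `rate` pictures per second."""
    return [round(1000 * n / rate) for n in range(rate * duration_s)]

as_50p = timestamps_ms(50)   # one output frame per field
as_25p = timestamps_ms(25)   # one output frame per interlaced frame

print("50p:", len(as_50p), "moments,", as_50p[1] - as_50p[0], "ms apart")
print("25p:", len(as_25p), "moments,", as_25p[1] - as_25p[0], "ms apart")
```

Half of the recorded moments never reach the screen in 25p, which is why fast movement looks jerky there.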

Using ffmpeg

FFmpeg is a great tool for processing video. It can do many forms of deinterlacing. One of the best options is bwdif, an advanced motion-adaptive deinterlacer that can recover some of the missing vertical resolution by looking at adjacent fields.

This will convert the source video using bwdif deinterlacing, producing one output frame per field (that is what bwdif=1 selects). It will use ffmpeg's default compression settings with the H.264 video codec.

    ffmpeg -i source_video_50i.mp4 -vf bwdif=1 output_video_50p.mp4
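For scripting the conversion, the same command can be assembled with the filter options written out by name. This is a sketch under a few assumptions: the `bwdif_command` helper is hypothetical, `-c:v libx264` assumes an ffmpeg build that includes that encoder, and `-c:a copy` (keep the audio as it is) is an addition that the command above does not make.

```python
import shlex

def bwdif_command(source, target, parity="auto"):
    """Build an ffmpeg argument list that deinterlaces `source` to one frame per field."""
    # mode=send_field is the named form of bwdif=1: one output frame per field.
    # parity may be "tff", "bff" or "auto"; deint=all deinterlaces every frame.
    video_filter = f"bwdif=mode=send_field:parity={parity}:deint=all"
    return [
        "ffmpeg",
        "-i", source,
        "-vf", video_filter,
        "-c:v", "libx264",   # assumption: name the H.264 encoder explicitly
        "-c:a", "copy",      # assumption: leave the audio stream untouched
        target,
    ]

cmd = bwdif_command("source_video_50i.mp4", "output_video_50p.mp4")
print(shlex.join(cmd))
# To actually run it: import subprocess; subprocess.run(cmd, check=True)
```

Passing the arguments as a list avoids shell quoting problems with file names that contain spaces.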